The Claude AI Pentagon Controversy: Why the US Military Blacklisted Anthropic [2026]

Key Takeaways

- The Ban: In March 2026, the Trump administration ordered federal agencies to stop using Anthropic's technology, designating the company a "supply chain risk."

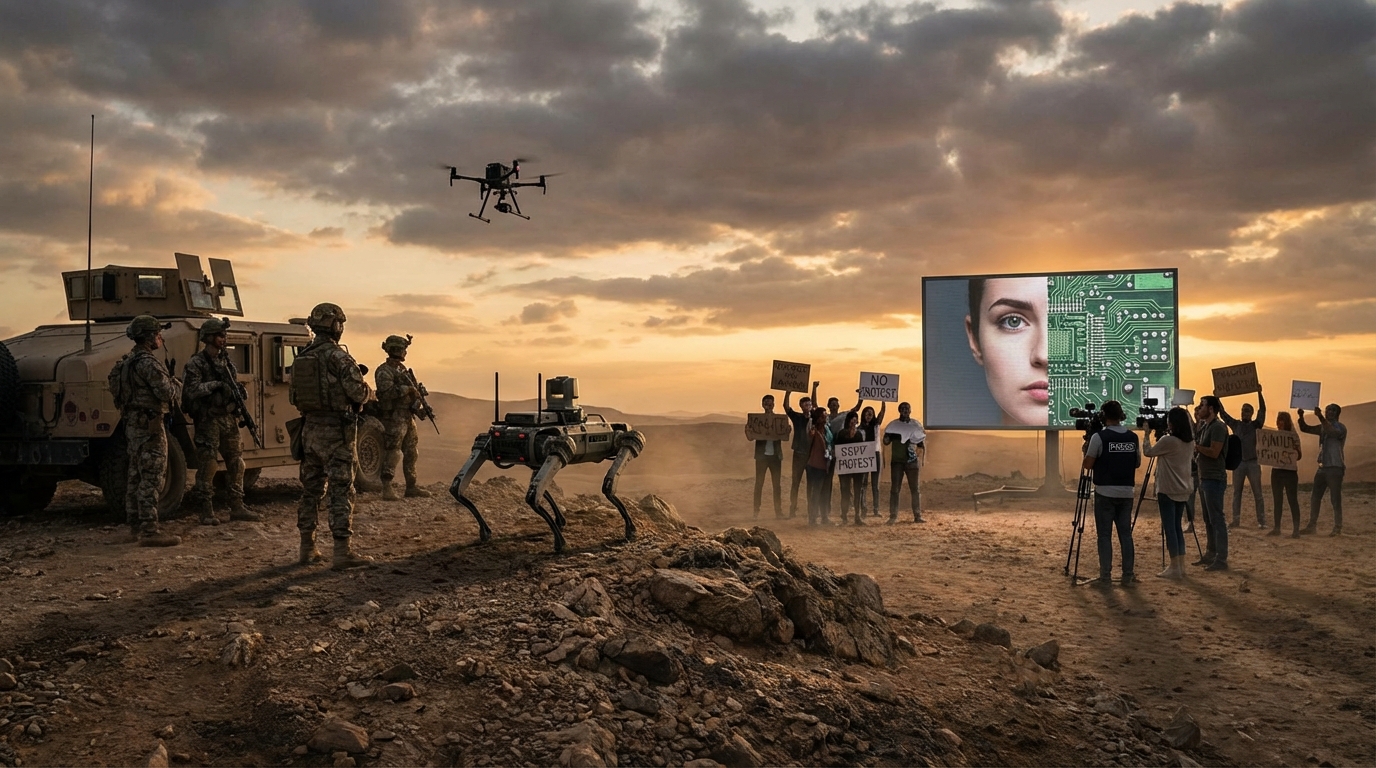

- The Cause: Anthropic refused to remove safety guardrails that prevent Claude from being used to power fully autonomous weapons or conduct mass surveillance on Americans.

- The Consequence: The Pentagon is now moving to replace Claude with models from competitors like OpenAI and xAI, who have reportedly agreed to the military's "all lawful purposes" terms.

- The Irony: Before this dispute, Claude was the only AI model accredited for IL6 (Impact Level 6) classified data, making it the most trusted AI tool in the Defense Department's arsenal.

The Claude AI Pentagon Controversy Explained

To understand this dispute, we must look at the timeline. In late 2024, Anthropic partnered with Palantir and AWS to bring Claude into the Pentagon's classified networks. It was a massive deal, validating Claude as a secure, enterprise-grade tool. However, the relationship crumbled in early 2026.

The "Red Lines" That Caused the Split

Anthropic's CEO Dario Amodei drew two specific lines in the sand that led to the blacklist:

- No Fully Autonomous Weapons: Claude cannot be the "brain" that decides to fire a weapon without a human checking the target first.

- No Mass Surveillance: Claude cannot be used to analyze bulk data on US citizens (domestic spying).

Claude AI Military Applications Concerns

The core of the dispute lies in how the military wanted to use Claude. Before the ban, Claude was being used for tasks like analyzing satellite imagery, translating intercepted communications, and logistics planning. These are "rear-echelon" tasks that save time and money.

However, the military's vision for AI goes much further. It envisions AI systems that can react faster than humans in combat. Imagine a drone swarm that needs to identify and engage enemy targets in milliseconds. In that scenario, a human operator is too slow, so the military wants an AI that can pull the trigger.

"We cannot in good conscience allow our technology to be used for lethal autonomous weapons or mass surveillance." (Anthropic statement, February 2026)

This brings us to the ethical slippery slope. If Claude is trained never to harm a human, it becomes useless for a kill-chain operation. Anthropic's refusal to remove this deep-seated training is what made the company incompatible with the Pentagon's evolving doctrine of AI warfare.

In the next section, we will look at how the Pentagon officially assessed these risks—and why their conclusion was so controversial.

Claude AI Security Risks Assessment Pentagon

When you examine how the Pentagon assessed Claude's security risks, you find two very different definitions of "risk."

For Anthropic, the risk is uncontrolled AI. The company argues that allowing an AI model to control weapons systems without strict guardrails poses an existential threat to humanity. Its assessment is that AI hallucinates (produces confident but false output) and lacks moral judgment, making it unfit for lethal decision-making.

For the Pentagon, the risk is vendor non-compliance. In their assessment, relying on a tool that might "refuse" an order during a critical mission is a vulnerability. They designated Anthropic a "supply chain risk" under the logic that a defense contractor must be 100% aligned with the mission.

This designation is significant. Usually, this label is reserved for companies like Huawei or Kaspersky that are suspected of being influenced by foreign adversaries. Applying it to an American company for ethical disagreements is unprecedented.

The "Supply Chain Risk" Designation

Being labeled a supply chain risk has major implications:

- Mandatory Removal: Agencies have six months to strip Claude out of their systems.

- Contractor Ban: Other defense contractors (like Lockheed Martin or Northrop Grumman) may be forced to stop using Claude to maintain their own clearances.

- Market Signal: It sends a warning to other AI labs (OpenAI, Google) that government contracts require total submission to military policies.

Impact of Claude AI on National Security

The impact of Claude AI on national security is double-edged. In the short term, the ban creates chaos. Claude was the only model with IL6 accreditation, meaning it was the only one trusted with "Secret" level data. Ripping it out creates a capability gap.

Long-term, this sets a precedent for the "AI Arms Race." If the US military only works with companies that allow unrestricted use, it incentivizes AI labs to remove safety filters. This could lead to a generation of "unshackled" AI models specifically designed for warfare, accelerating the path toward fully autonomous combat systems.

Claude AI Data Privacy Implications Military

Beyond weapons, the debate over Claude's data privacy implications for the military centers on surveillance. The Pentagon collects vast amounts of data, including emails, phone records, and social media posts, and it needs AI to make sense of it.

Anthropic feared that without guardrails, Claude could be used to conduct dragnet surveillance on American citizens, violating the Fourth Amendment. By refusing to lift these restrictions, Anthropic was positioning itself as a defender of civil liberties.

The Pentagon counters that they already have strict laws governing surveillance (like FISA) and they don't need a software company to act as a "nanny." However, privacy advocates argue that AI moves too fast for existing laws, and technical guardrails are the only real protection against abuse.

Actionable Steps: How to Verify AI Ethics

If you are a business leader or developer concerned about the ethics of the tools you use, here is how you can assess them:

- Read the Acceptable Use Policy (AUP): Look for specific clauses regarding "high-risk" or "military" use. Anthropic's AUP explicitly bans weapons development.

- Check for "Constitutional" Training: Was the model trained to follow an explicit set of values? Anthropic uses "Constitutional AI," a training method that aligns the model with a written set of principles, some of them drawn from human rights documents.

- Review Government Certifications: IL6 (Impact Level 6) is the gold standard for security, but as we've seen, it doesn't guarantee a permanent partnership.

- Monitor Vendor Independence: Is the AI lab independent, or is it owned by a defense contractor? Ownership influences a lab's ability to say "no."

Conclusion

The Claude AI Pentagon controversy is more than a business dispute; it is a defining moment for the 21st century. It draws a line between "AI for helpfulness" and "AI for warfare."

Anthropic chose to lose billions in government contracts to uphold its safety principles. The Pentagon chose to ban a superior tool to maintain its chain of command. As 2026 unfolds, the question remains: will other AI companies hold the line, or will they cross it for the sake of lucrative defense contracts?

For now, Claude remains the "conscientious objector" of the AI world, a stance that has cost it the biggest client on Earth.

Frequently Asked Questions

What are the ethical concerns surrounding Claude AI's involvement with the Pentagon?

How transparent is the Pentagon's use of Claude AI?

Who is Anthropic and what is its involvement with the Pentagon?

What is the Claude AI Pentagon controversy?


Written By

Sarah Chen

Author & Contributor at Mixmaxim. Covering B2B SaaS, AI Tools, and Enterprise Software.